If you’ve ever maintained a single API client library, you know the drill: new endpoints ship, edge cases appear, users report bugs, and suddenly your “small” SDK becomes a product of its own. Now multiply that by 11 languages, a growing set of APIs, and multiple framework integrations. That’s where we found ourselves at Algolia.

In my DevBit 2025 talk, I framed it as a joke: “How many engineers does it take to maintain 11 API clients? 11 engineers.” The real answer is more interesting — because the only sustainable way we found wasn’t scaling headcount, it was scaling automation.

This post is the story of how we went from “100+ packages to maintain” to a system where a small team can keep our SDK ecosystem consistent, tested, documented, and shipped — with one source of truth.

The real problem wasn’t 11 languages

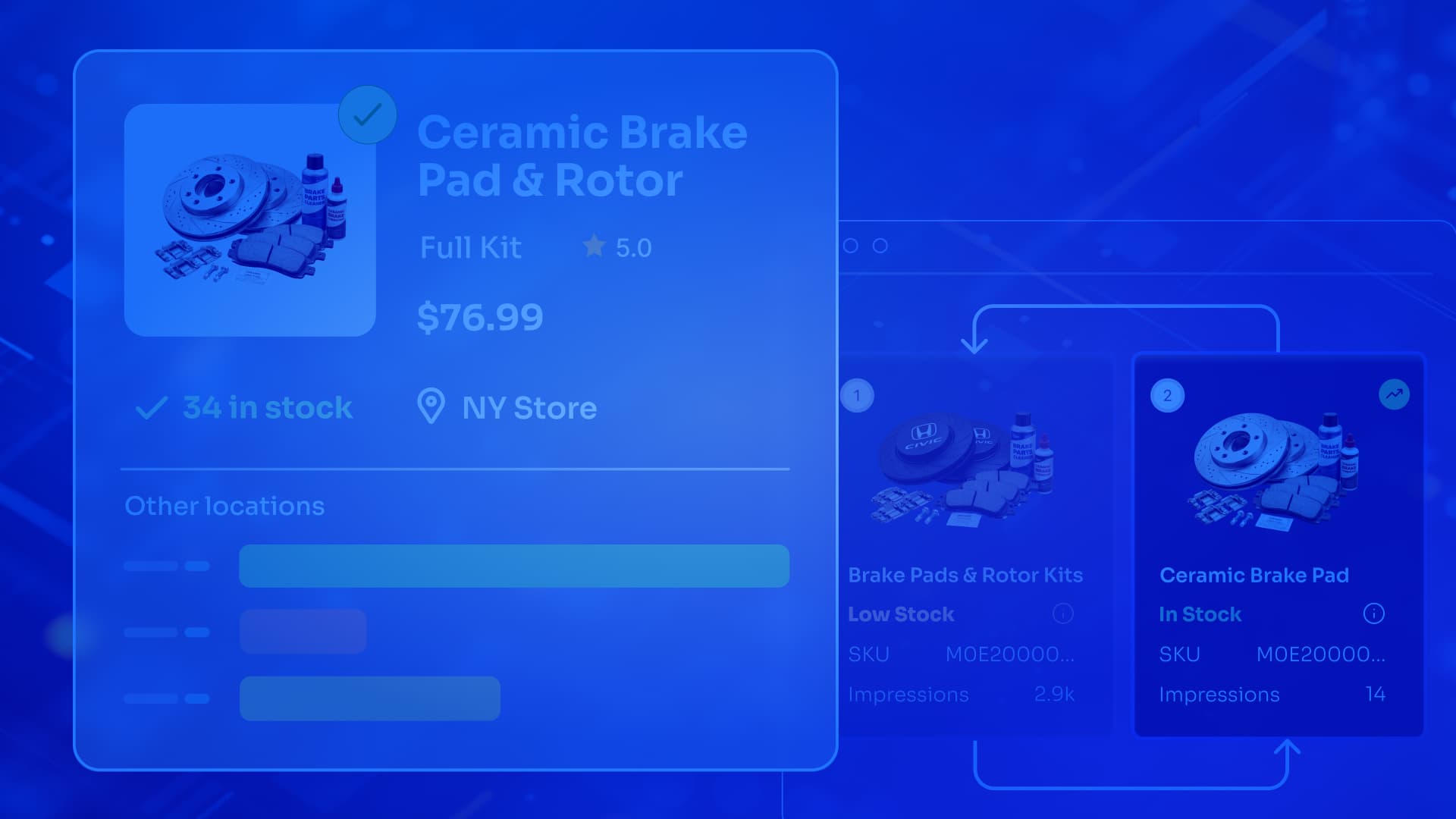

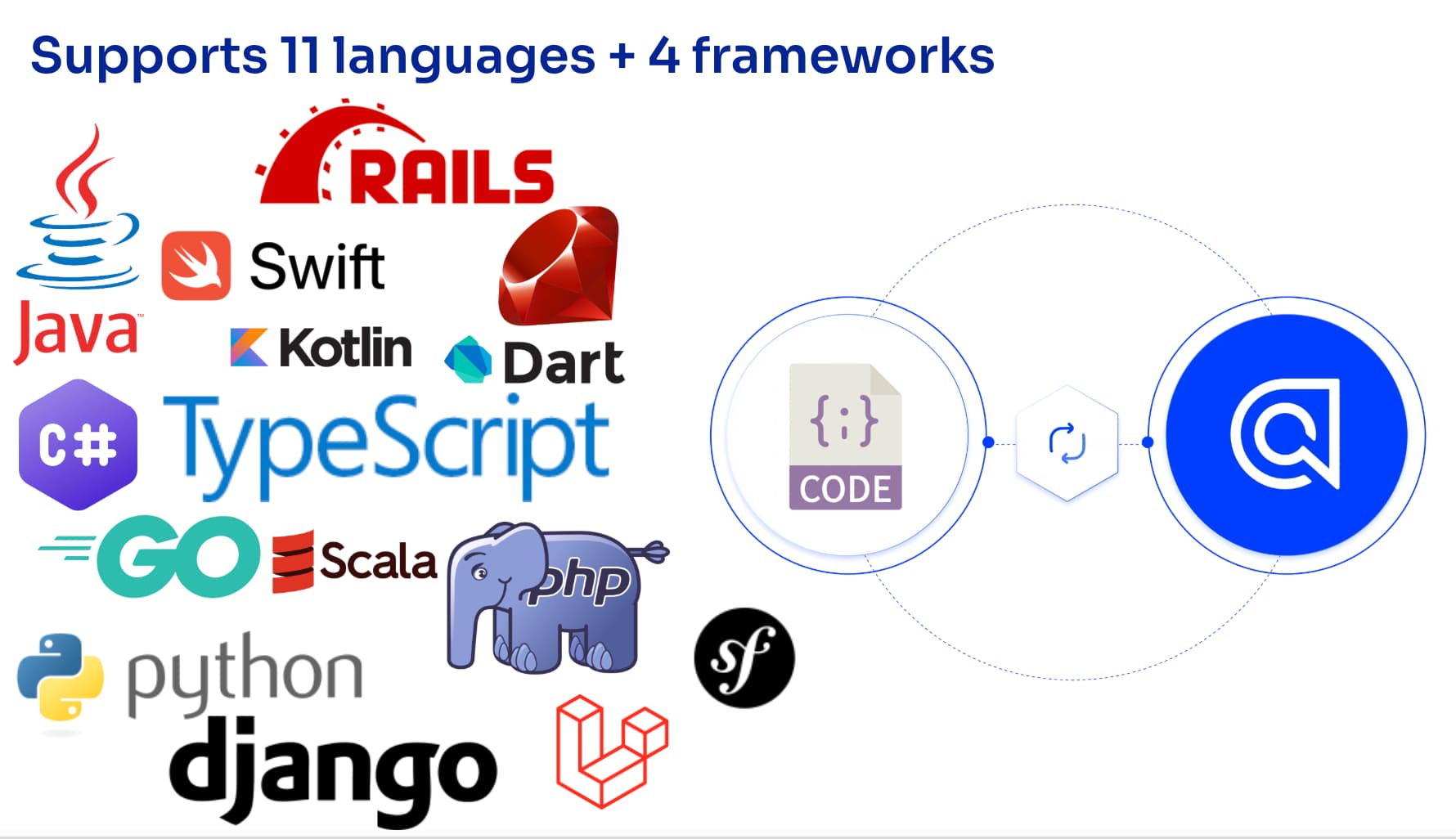

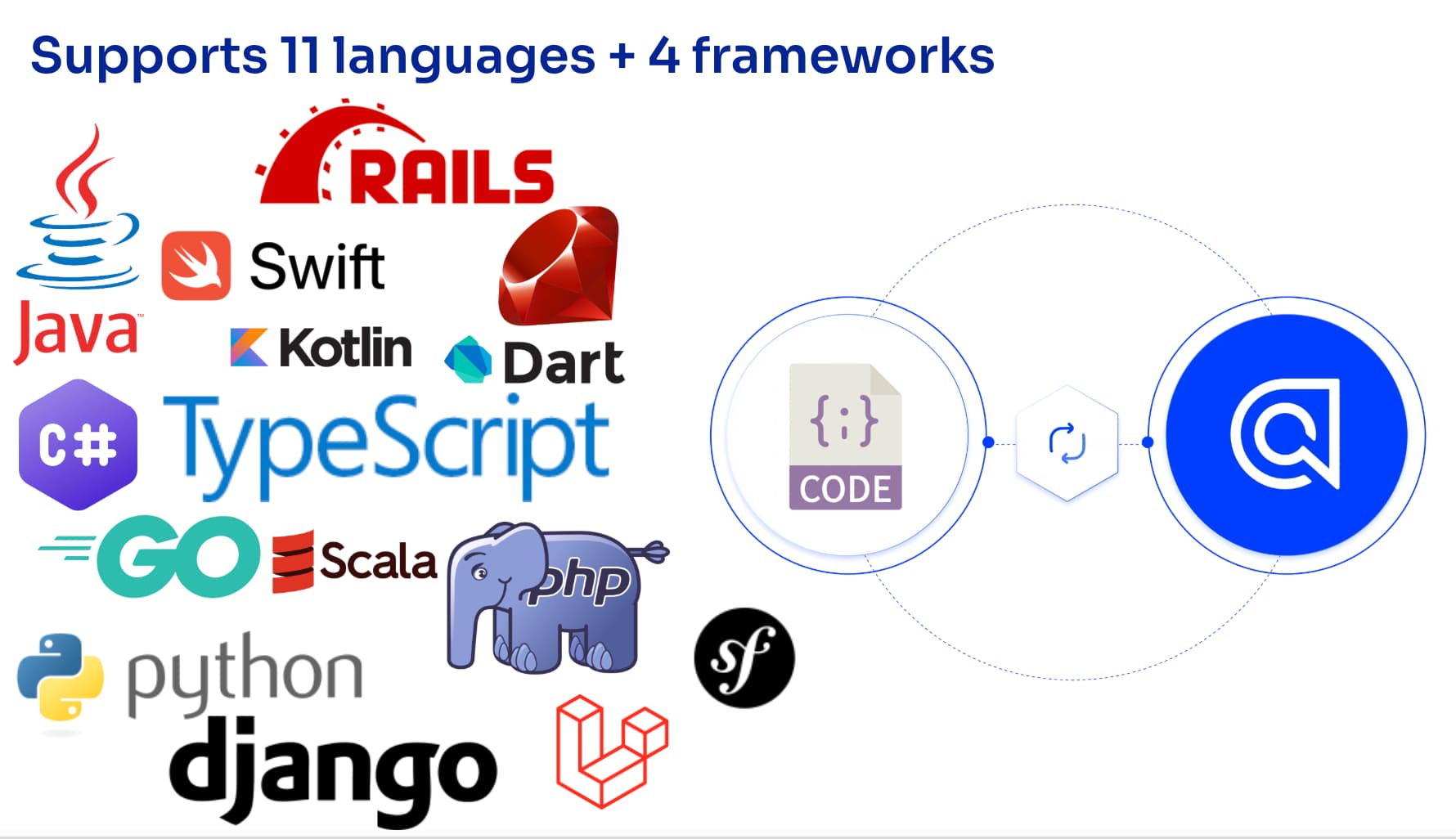

Supporting 11 languages is great for developers:

- JVM-based: Java, Kotlin, Scala

- Scripting: TypeScript, Python, Ruby, PHP

- Compiled: Swift, Go, C#, Dart

- Plus framework packages like Rails, Django, Symfony

But on the maintenance side, that’s where the math gets brutal. Algolia is API-first, and we’re no longer “just search.” We also ship products like NeuralSearch, Recommendations, Analytics, and more. Every new capability adds surface area across the SDKs. In practice, “support 11 languages” turns into something like:

- many APIs

- times many languages

- plus framework wrappers

- plus bugs, consistency issues, release cadence, docs…

It quickly becomes 100+ packages that need to build, stay consistent, and evolve together. So we reasoned through the obvious organizational solutions, and why each one fell short:

Option 1: one dedicated team per language

This is the classic approach: a Go team, a Python team, a Swift team, and so on.

The tradeoffs hit fast:

- High cognitive load: each team still needs to understand every API well enough to implement it correctly.

- Idle time: when API work slows, SDK specialists can’t always stay meaningfully utilized.

- Cost: the headcount scales linearly with the number of languages.

Option 2: embed SDK work in each API team

We also considered flipping it: make API teams responsible for updating SDKs when they ship features. But this creates a different set of problems:

- Context switching: API teams focus on backend behavior, not SDK ergonomics and edge cases.

- “Jack of all trades” risk: people end up being experts in no language’s quirks.

- Cross-cutting changes get messy: who owns shared concerns like retry strategy, consistency rules, or test frameworks?

At some point, we realized we weren’t missing a better org chart. We were missing a better system.

OpenAPI gave us a lever

We needed a standard way to describe our APIs that could drive automation end-to-end. If only there were a standard… a technology that could take a description of an API on one hand and, on the other, turn it into code that calls that API…?

That lever, we realized, was OpenAPI: a widely adopted standard for describing APIs in machine-readable YAML or JSON, covering endpoints, parameters, auth, schemas, responses, everything.
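To make “machine-readable” concrete, here is a minimal sketch that parses a tiny OpenAPI 3 document and lists its operations. The document, its paths, and the `list_operations` helper are hypothetical illustrations, not excerpts from Algolia’s specs. JSON is used so the example needs only the standard library; YAML would need a third-party parser.

```python
import json

# A tiny, hypothetical OpenAPI 3 document. The paths and operationIds are
# illustrative only; real API specs are far larger.
SPEC_JSON = """
{
  "openapi": "3.0.2",
  "info": {"title": "Example Search API", "version": "1.0.0"},
  "paths": {
    "/indexes/{indexName}/query": {
      "post": {
        "operationId": "searchIndex",
        "parameters": [
          {"name": "indexName", "in": "path", "required": true,
           "schema": {"type": "string"}}
        ],
        "responses": {"200": {"description": "OK"}}
      }
    },
    "/indexes": {
      "get": {
        "operationId": "listIndices",
        "responses": {"200": {"description": "OK"}}
      }
    }
  }
}
"""

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def list_operations(spec: dict) -> list:
    """Return (operationId, HTTP method, path) for every operation in the spec."""
    ops = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method in HTTP_METHODS:  # skip path-level keys such as "parameters"
                ops.append((operation["operationId"], method.upper(), path))
    return ops


spec = json.loads(SPEC_JSON)
for op in list_operations(spec):
    print(op)
```

Everything downstream (client methods, tests, docs) can be derived from structured data like this instead of from prose.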

But the important part wasn’t “OpenAPI generates clients.” The important part was, if we treat the API spec as the source of truth, we can automate not only SDK generation — but also validation, testing, documentation, and release.

That’s the path we took.

Our pipeline

We built a centralized pipeline (driven from a CLI, containerized via Docker) that orchestrates the full lifecycle.
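The CLI’s internals aren’t described here, so the following is only a sketch of the orchestration idea: each language runs through ordered stages and stops at the first failure. The stage names, the `run_pipeline` helper, and the placeholder stage functions are assumptions; real stages would shell out to generators, formatters, test runners, and publishers, presumably inside the Docker image.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Stage = Callable[[str], bool]  # takes a language name, returns True on success


@dataclass
class PipelineResult:
    language: str
    completed: list = field(default_factory=list)
    failed_at: Optional[str] = None


def run_pipeline(language: str, stages: list) -> PipelineResult:
    """Run (name, stage) pairs in order for one language; stop at the first failure."""
    result = PipelineResult(language)
    for name, stage in stages:
        if not stage(language):
            result.failed_at = name
            break
        result.completed.append(name)
    return result


# Hypothetical placeholder stages: everything succeeds except "test" for Go.
STAGES = [
    ("generate", lambda lang: True),
    ("format", lambda lang: True),
    ("test", lambda lang: lang != "go"),
    ("docs", lambda lang: True),
    ("release", lambda lang: True),
]

for lang in ["python", "go"]:
    print(run_pipeline(lang, STAGES))
```

Stopping early means a language that fails its tests never reaches the release stage.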

1. Start with solid specs (the baseline)

Everything depends on the quality of the OpenAPI specs. We did the unglamorous work of writing one spec per API, covering behavior from A to Z, and sticking as closely as possible to the standard. It took time, but it established the foundation we needed.
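Treating the spec as the source of truth also means checking it before anything is generated from it. Below is a minimal, hypothetical lint pass with three example checks (missing or duplicate `operationId`, missing responses, undeclared path parameters). It is not Algolia’s validator, and it does not resolve `$ref` parameters; dedicated OpenAPI linters cover far more rules.

```python
import re

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def lint_spec(spec: dict) -> list:
    """Return problems found in an OpenAPI document (an empty list means clean).

    Illustrative checks only; $ref parameters are not resolved."""
    problems = []
    seen_ids = set()
    for path, item in spec.get("paths", {}).items():
        templated = set(re.findall(r"\{([^}]+)\}", path))
        path_level = item.get("parameters", [])  # apply to every operation on the path
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue
            where = f"{method.upper()} {path}"
            op_id = op.get("operationId")
            if not op_id:
                problems.append(f"{where}: missing operationId")
            elif op_id in seen_ids:
                problems.append(f"{where}: duplicate operationId '{op_id}'")
            else:
                seen_ids.add(op_id)
            if not op.get("responses"):
                problems.append(f"{where}: no responses defined")
            declared = {
                p.get("name") for p in path_level + op.get("parameters", [])
                if p.get("in") == "path"
            }
            for name in sorted(templated - declared):
                problems.append(f"{where}: path parameter '{name}' not declared")
    return problems


bad_spec = {
    "paths": {
        "/indexes/{indexName}": {
            "get": {"responses": {"200": {"description": "OK"}}},
            "delete": {"operationId": "deleteIndex"},
        }
    }
}
for problem in lint_spec(bad_spec):
    print(problem)
```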

2. Generate SDK code with custom generators and templates

OpenAPI’s default generators are useful, but they’re generic. We needed clients that felt like “real” native SDKs across 11 ecosystems. So we built and customized:

- Custom generators (extending OpenAPI tooling)

- Mustache templates for language- and style-specific output (casing, parameters, idioms)
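The core idea of template-driven generation is that one operation in the spec becomes an idiomatic method in each language. This sketch uses Python’s `string.Template` as a stand-in for Mustache; the casing rules, signatures, and the `searchIndex` operation are illustrative assumptions, not Algolia’s actual templates.

```python
import re
from string import Template


def to_snake(name: str) -> str:
    """camelCase -> snake_case (naive: acronyms like 'APIKey' need extra rules)."""
    return re.sub(r"(?<!^)(?=[A-Z])", "_", name).lower()


def to_camel(name: str) -> str:
    return name[:1].lower() + name[1:]


def to_pascal(name: str) -> str:
    return name[:1].upper() + name[1:]


# language -> (method-name casing, parameter casing, signature template).
# Hypothetical styles; real templates emit whole files, not one line.
STYLES = {
    "python": (to_snake, to_snake,
               Template("def ${name}(self, ${param}: str) -> dict: ...")),
    "typescript": (to_camel, to_camel,
                   Template("${name}(${param}: string): Promise<Record<string, unknown>>;")),
    "go": (to_pascal, to_camel,
           Template("func (c *Client) ${name}(${param} string) (map[string]any, error)")),
}


def render_signatures(operation_id: str, param: str) -> dict:
    """Render one spec operation into an idiomatic signature per language."""
    return {
        lang: template.substitute(name=name_case(operation_id), param=param_case(param))
        for lang, (name_case, param_case, template) in STYLES.items()
    }


for lang, line in render_signatures("searchIndex", "indexName").items():
    print(f"{lang:<10} {line}")
```

Because every signature is derived from the same operation, renaming it in the spec or fixing one casing helper changes every language at once.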

At that point we crossed a threshold: we weren’t writing SDKs anymore, we were writing code that writes SDKs. The payoff is immediate:

- when an API evolves, regeneration updates every SDK quickly

- when we fix a bug in generation logic, it’s fixed across all languages at once

- generated code is consistent by default

3. Format everything so diffs and changelogs stay sane

One practical thing we learned: generated code still needs formatting discipline. IDEs and language servers “fix things for you” in hand-written code (whitespace, style normalization, removing useless returns, etc.). In generated code, that step must be part of the pipeline — otherwise diffs get noisy and changelogs become painful. Formatting is built into the process.
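To see why formatting belongs in the pipeline, consider two generator runs that differ only in incidental whitespace. The `normalize` function below is a deliberately tiny, hypothetical stand-in for a real formatter (in practice each language’s own tool, such as gofmt or Prettier, would play this role); the point is that diffing formatted output removes the noise.

```python
import difflib


def normalize(source: str) -> str:
    """Toy formatter: strip trailing whitespace, collapse runs of blank
    lines, and end the file with exactly one newline."""
    out = []
    for line in (raw.rstrip() for raw in source.splitlines()):
        if line == "" and out and out[-1] == "":
            continue  # collapse consecutive blank lines
        out.append(line)
    return "\n".join(out).strip("\n") + "\n"


def diff(old: str, new: str) -> list:
    """Unified diff lines between two versions of a file."""
    return list(difflib.unified_diff(old.splitlines(), new.splitlines(), lineterm=""))


# Two generator runs that differ only in whitespace.
run_a = "def f():   \n    return 1\n\n\n\n"
run_b = "def f():\n    return 1\n"

print(len(diff(run_a, run_b)) > 0)          # raw output: a noisy, non-empty diff
print(diff(normalize(run_a), normalize(run_b)))  # formatted output: no diff at all
```

A formatter used this way also needs to be idempotent, so formatting already-formatted code never produces a diff.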

4. Accept the 80/20 reality: not everything should be generated

Even with strong generation, we still write some code by hand, and the split roughly follows the Pareto principle:

- ~80% generated from spec and templates

- ~20% manual for language or Algolia-specific behavior

The two examples I called out in the talk were Python’s sync and async clients, which needed hand-written structure to support well, and strong typing and generics (Java, TypeScript, etc.), which needed careful language-specific handling so that returned records match the types users expect.
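A minimal sketch of both ideas, under stated assumptions: `AsyncSearchClient` plays the role of generated async code with a fake transport, `SearchClient` is a hand-written synchronous facade over it, and the caller passes a `record_type` so hits come back as its own type rather than raw dictionaries. All class and method names are hypothetical, not the Algolia Python client’s API.

```python
import asyncio
from dataclasses import dataclass
from typing import Generic, Type, TypeVar

T = TypeVar("T")


@dataclass
class SearchResponse(Generic[T]):
    hits: list[T]


class AsyncSearchClient:
    """Hypothetical stand-in for generated async code; the fake transport
    returns canned raw hits instead of making HTTP requests."""

    async def search(self, index_name: str, record_type: Type[T]) -> SearchResponse[T]:
        await asyncio.sleep(0)  # yield to the event loop, as real network I/O would
        # index_name is ignored by this fake transport.
        raw_hits = [{"objectID": "1", "name": "Ada"}, {"objectID": "2", "name": "Linus"}]
        # Hand-written generic part: build the caller's record type from raw dicts.
        return SearchResponse(hits=[record_type(**hit) for hit in raw_hits])


class SearchClient:
    """Hand-written sync facade that reuses the async implementation."""

    def __init__(self) -> None:
        self._async = AsyncSearchClient()

    def search(self, index_name: str, record_type: Type[T]) -> SearchResponse[T]:
        # asyncio.run starts a fresh event loop per call and cannot be
        # used from inside an already running loop.
        return asyncio.run(self._async.search(index_name, record_type))


@dataclass
class Person:
    objectID: str
    name: str


response = SearchClient().search("people", Person)
print([p.name for p in response.hits])  # ['Ada', 'Linus']
```

`asyncio.run` keeps the sketch short, but its event-loop restrictions are exactly the kind of language-specific detail that makes this part worth writing by hand.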

And then there are Algolia-specific client behaviors like…

5. Bake in Algolia-specific concerns (like retry strategy)

One SDK concern we treat as first-class is retry behavior: automatically retrying failing requests based on error codes, using an exponential backoff strategy. That logic needs to be consistent across every SDK. The pipeline makes it a shared, enforceable behavior rather than 11 separate implementations that drift over time.
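Here is a minimal sketch of retrying on error codes with exponential backoff. The set of retryable status codes, the delay parameters, and the `HttpError` and `with_retries` names are assumptions for illustration; the actual values Algolia uses aren’t specified here. Jitter is omitted to keep the behavior deterministic, and `sleep` is injectable so the delays can be observed.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Assumed retryable codes: timeouts, rate limiting, and transient server errors.
RETRYABLE_STATUS = {408, 429, 500, 502, 503, 504}


class HttpError(Exception):
    def __init__(self, status: int) -> None:
        super().__init__(f"HTTP {status}")
        self.status = status


def with_retries(
    request: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call request(); retry retryable HTTP errors with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(max_attempts):
        try:
            return request()
        except HttpError as err:
            out_of_attempts = attempt == max_attempts - 1
            if err.status not in RETRYABLE_STATUS or out_of_attempts:
                raise  # e.g. 400 or 404, or no attempts left
            sleep(min(max_delay, base_delay * 2 ** attempt))  # 0.1, 0.2, 0.4, ...
    raise AssertionError("unreachable")


# Usage: a request that fails twice with 503, then succeeds.
statuses = [503, 503]
delays = []


def flaky() -> str:
    if statuses:
        raise HttpError(statuses.pop(0))
    return "ok"


print(with_retries(flaky, sleep=delays.append))  # ok
print(delays)  # [0.1, 0.2]
```

Writing this once in the shared pipeline, rather than per SDK, is what keeps the same codes and delays in every language.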

Extending the model for tests

Generated code is good. Generated code with confidence is better. So we extended the model:

- specs are JSON/YAML

- generators output client code

- generators also output tests

This includes:

- lots of unit tests (we’re close to 1,000)

- end-to-end tests that hit real Algolia indices to validate real behavior

Our CLI can run the full suite across languages and APIs in parallel, which means we can detect regressions early and keep behavior aligned across SDKs. This is also where the idea of a common test suite really matters: we want equivalent behavior validated consistently, even when languages behave differently.
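Running suites in parallel amounts to fanning out over a (language, API) matrix. In this sketch `run_suite` is a hypothetical placeholder that simulates one failure; a real runner would invoke each language’s test tooling, for example through `subprocess`. Threads fit because that work would mostly be waiting on external processes.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

LANGUAGES = ["python", "go", "swift"]  # a subset, for illustration
APIS = ["search", "analytics"]


def run_suite(language: str, api: str) -> bool:
    """Hypothetical placeholder for running one language's tests for one API.
    Here it simply simulates a single failing combination."""
    return not (language == "swift" and api == "analytics")


def run_matrix(languages: list, apis: list, max_workers: int = 8) -> dict:
    """Run every (language, api) suite concurrently; map each pair to pass/fail."""
    jobs = list(product(languages, apis))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        outcomes = list(pool.map(lambda job: run_suite(*job), jobs))
    return dict(zip(jobs, outcomes))


results = run_matrix(LANGUAGES, APIS)
failures = [job for job, passed in results.items() if not passed]
print(failures)  # [('swift', 'analytics')]
```

`pool.map` returns results in input order, so zipping them back onto `jobs` pairs each outcome with the right combination.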

Docs and release aren’t afterthoughts: they’re generated too

Once code generation and test generation worked, we added the final step of release automation, and we treated “understandability” as part of release quality. The pipeline also generates:

- SDK documentation that stays aligned with what the SDK actually does

- code samples/snippets we can embed in docs (and even places like dashboards)
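Docs stay aligned when they are rendered from the same spec data as the SDK code. The sketch below turns one operation into a Markdown reference entry plus a Python snippet. The operation, its `example` value, the `client` variable in the snippet, and the output layout are illustrative assumptions, not Algolia’s documentation format.

```python
import re

FENCE = "`" * 3  # built indirectly so this example can sit inside a Markdown fence


def to_snake(name: str) -> str:
    return re.sub(r"(?<!^)(?=[A-Z])", "_", name).lower()


def render_doc(op_id: str, method: str, path: str, operation: dict) -> str:
    """Render a Markdown reference entry plus a Python snippet for one operation."""
    params = operation.get("parameters", [])
    lines = [f"### {op_id}", "", operation.get("summary", ""), "", f"`{method} {path}`", ""]
    if params:
        lines += ["| Parameter | In | Required | Type |", "| --- | --- | --- | --- |"]
        for p in params:
            required = "yes" if p.get("required") else "no"
            ptype = p.get("schema", {}).get("type", "any")
            lines.append(f"| {p['name']} | {p['in']} | {required} | {ptype} |")
    # The snippet comes from the same spec data, so it cannot drift from the table.
    args = ", ".join(f"{to_snake(p['name'])}={p['example']!r}" for p in params if "example" in p)
    lines += ["", FENCE + "python", f"client.{to_snake(op_id)}({args})", FENCE]
    return "\n".join(lines)


operation = {
    "summary": "Search a single index.",
    "parameters": [
        {"name": "indexName", "in": "path", "required": True,
         "schema": {"type": "string"}, "example": "products"},
    ],
}
print(render_doc("searchIndex", "POST", "/indexes/{indexName}/query", operation))
```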

Then it releases SDKs to the appropriate package manager for each language, including multi-platform builds where needed (for example, Swift and Kotlin targets across macOS and Linux). The whole pipeline runs end-to-end in under 20 minutes. That fast feedback loop changes everything: it makes it realistic to keep 11 SDKs aligned without heroic effort.
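Releasing per language, with extra builds for some targets, can be modeled as expanding a job matrix. The registry names below are the usual public registries for each ecosystem, but which ones Algolia actually publishes to, and the exact platform matrix, are assumptions; only the macOS and Linux targets for Swift and Kotlin come from the text.

```python
# Usual public registry per ecosystem (an assumption about actual publishing targets).
REGISTRIES = {
    "java": "Maven Central", "kotlin": "Maven Central", "scala": "Maven Central",
    "dart": "pub.dev", "typescript": "npm", "python": "PyPI", "ruby": "RubyGems",
    "php": "Packagist", "swift": "Swift Package Manager (git tag)",
    "go": "Go modules (git tag)", "csharp": "NuGet",
}

# Only some SDKs need per-platform builds; everything else builds once.
BUILD_PLATFORMS = {"swift": ["macos", "linux"], "kotlin": ["macos", "linux"]}


def release_jobs(languages: list) -> list:
    """Expand languages into (language, platform, registry) release jobs."""
    jobs = []
    for lang in languages:
        for platform in BUILD_PLATFORMS.get(lang, ["any"]):
            jobs.append((lang, platform, REGISTRIES[lang]))
    return jobs


jobs = release_jobs(list(REGISTRIES))
print(len(jobs))  # 13: 11 languages, two of which build on two platforms
for job in jobs[:3]:
    print(job)
```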

So how many engineers does it take?

Here’s the more honest answer to the opening joke:

- It doesn’t take 11 engineers to hand-maintain 11 SDKs.

- It takes a small team to maintain the automation pipeline that maintains the SDKs.

In the talk, we put that number at three engineers for ongoing pipeline maintenance — with an important caveat: that doesn’t include the historical investment to build it, and it absolutely doesn’t discount the open source tools and communities we built on.

What’s next: letting more teams ship clients through the pipeline

If I had to boil it down to two lessons, they would be:

- Don’t rewrite what the ecosystem already solved: OpenAPI is widely used for a reason. There’s a huge community behind it, and when you hit a problem, chances are someone has already explored it. We built on the shoulders of giants, then customized what we needed.

- Don’t try to automate everything: Automation is powerful, but there will always be a portion that needs human judgment — language quirks, SDK ergonomics, and product-specific behavior. Accepting that 80/20 split is what made the system practical.

One of the most exciting questions from the Q&A was whether an automated system like this can help other teams generate their own client specs.

Yes — and that’s where we want to go next.

Our goal is to make it easy for internal teams to onboard to the pipeline, generate even small/private clients while incubating features, and then graduate those specs into the public SDKs once the feature is ready.

We have open-sourced this project; check out our API Clients Automation repository on GitHub. You can also watch the recording of the presentation.
